We talk about security around Dataverse and Power Platform from time to time. We even dabble in platform-agnostic security tips. Today is all about vendor-agnostic cybersecurity.

Learn the fundamentals of identity management, zero trust, AppSec, and data security in “Security for Beginners”, a new seven-lesson open-source course created by Microsoft Cloud Advocates. Each lesson should take around 30-60 minutes to complete and will help kick-start your security learning.

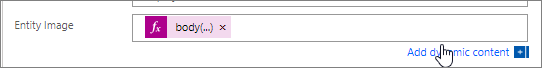

![Screenshot of two steps in a Power Automate flow: (1) a Compose step that contains the expression [{"Name": "Foo"}, {"Name": "Bar"}]; (2) a Select step with From: range(1, length(outputs('Compose'))), Map > Order: item(), Map > Order: outputs('Compose')?[item()]?['Name']](https://crmtipoftheday.com/wp-content/uploads/2022/04/image.png)